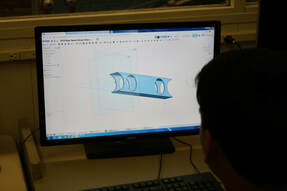

For the past week, the team has weighed pros and cons, finalized CAD designs, and worked on prototypes for our final robot. Here is an in-depth look at what each sub-team completed this week:

Elevator: This week, development of the elevator continued as the elevator sub-group completed the CAD for the original design. They identified the faults in this design and altered it to make one that is simpler to build. The motors, motor controllers, and sensors that will run the elevator have been discussed and shared with the programming team, who can therefore start writing the elevator code even before the team completes the build. Since the rest of the subsystems are either directly tied to or in some way involve the elevator, the team hopes to begin building next week.

Manipulator: This week, the group of students working on intake designs for the hatch panels (discs) and cargo (13 in balls) continued fleshing out designs that could handle both game pieces. Once the designs were pared down to two, they made drawing sheets (sheets of paper showing several views of each part with dimensions) and used these to begin fabricating the designs. The team used the chop saw to cut metal tubing to size and the mill to drill precision holes. The only remaining parts need to be cut out on our CNC router, a tool that cuts complex precision parts by removing material with a side-cutting bit until the desired shape is left. Once these are cut out, we can build both intakes and test them to see which is better suited to the game. This same group of students also worked on our drivetrain construction. They assembled pulleys and gearboxes to spin six wheels with only four motors. Once the drivetrain is complete and the subsystems are assembled, the team can begin putting the entire robot together.

Software: This week, the software team further developed the TGA User Interface. The app has tabs for each part of a match: auto (sandstorm), teleoperated, and endgame. It is designed to be user-friendly and to clearly show the metrics that demonstrate how skilled a team is. The software team is also working on using an Arduino (a microcontroller board that can read sensor input) and I2C (a serial bus for connecting sensors and controllers) with LIDAR. LIDAR is a laser-based sensing system that measures the distance to an object. In our case, we will use LIDAR to line up with reflective tape that will be on the field. We will use the Arduino and I2C to read the data the LIDAR produces and then send it to the Rio to tell the robot in which direction and how far to move. The software team is also developing code for the robot subsystems the team expects to use, even though the team hasn’t started fabrication yet. Different classes in the code correspond to different parts of the robot, and the software team is coding what they can without testing directly. They have also begun developing the drivetrain code, which is very similar to last year’s.
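
To make the LIDAR alignment idea a bit more concrete, here is a minimal sketch of how a distance reading could be turned into a "which direction and how much" drive command, with one class per robot part. It is written in Python purely for illustration and is not the team's actual code: the names `FakeLidar`, `Drivetrain`, `approach_speed`, and `align_step`, along with the target distance, gain, and tolerance values, are all assumptions. The sensor is a stand-in, so the logic can be exercised before any hardware exists.

```python
from dataclasses import dataclass


@dataclass
class FakeLidar:
    """Hypothetical stand-in for a LIDAR that would be read over I2C."""
    distance_cm: float

    def read_distance_cm(self) -> float:
        return self.distance_cm


class Drivetrain:
    """Hypothetical subsystem class; it only records the last command."""

    def __init__(self) -> None:
        self.speed = 0.0

    def drive(self, speed: float) -> None:
        # Clamp to the -1..1 range that motor outputs commonly use.
        self.speed = max(-1.0, min(1.0, speed))


def approach_speed(distance_cm: float,
                   target_cm: float = 30.0,   # assumed stopping distance
                   gain: float = 0.02,        # assumed proportional gain
                   tolerance_cm: float = 2.0  # assumed "close enough" band
                   ) -> float:
    """Proportional rule: positive means drive forward, negative means back up."""
    error = distance_cm - target_cm
    if abs(error) <= tolerance_cm:
        return 0.0
    return gain * error


def align_step(lidar: FakeLidar, drivetrain: Drivetrain) -> None:
    """One control-loop step: read the sensor, command the drivetrain."""
    drivetrain.drive(approach_speed(lidar.read_distance_cm()))


if __name__ == "__main__":
    drivetrain = Drivetrain()
    for reading in (200.0, 50.0, 31.0, 10.0):
        align_step(FakeLidar(reading), drivetrain)
        print(reading, drivetrain.speed)
```

Keeping the sensor behind a small class like this is one way to write and check subsystem logic before fabrication: the fake can later be swapped for a class that reads the real device.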

